A Hot Topic: Why The AI Datacenter Boom Depends On Improved Chip Cooling

Lack of effective cooling is emerging as a key limiting factor for datacenters and the AI GPUs that run in them. High-powered GPUs generate a lot of heat, and getting rid of that heat is a major problem. Not only is cooling expensive (it can account for up to 40% of the energy used in a datacenter), but ineffective cooling also limits the power that chips can run at and significantly reduces their lifetime; not great when they are so expensive! This article explores possible solutions to this problem, and how M-Spin’s materials innovations can help.

What limits what a datacenter can do?

The obvious answer to this question is the raw power of the processing units (typically GPUs); in this case, however, the obvious answer is not the full story. High-end processors have now become so powerful that they are limited not only by how fast they can run but also by how hot they get while running. If running at 100%, high-end chips can easily exceed 150 °C, with severe consequences for their lifespan. In practice, output is throttled so that temperatures don’t rise above about 100 °C. Such protective measures can only do so much though, and consequently chip lifetimes in datacenters are very short (typically between 1 and 3 years). There’s a reason why makers of high-performance gaming laptops recommend you buy a cooling pad to go with them – it enables the processor to run harder for longer.

The Burning Issue of Thermal Management

Why is temperature such a killer for electronics?

Electronics consume more power when they’re hot, and their components burn out faster: in one study, a temperature rise of 30 °C increased power consumption by 14% and halved the operating lifetime from 10 to 5 years [1].
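As a rough illustration of what these figures imply, the sketch below interpolates between the two reported points. It assumes, purely for illustration, that lifetime halves and power draw grows by 14% for every 30 °C of temperature rise in a smooth exponential fashion. The study quoted above gives only the end points, so the exponential form and the function names are assumptions, not results from [1].

```python
# Illustrative only: exponential interpolation of the single data point quoted
# in the text (a 30 °C rise -> +14% power, lifetime 10 -> 5 years).
# The exponential form is an ASSUMPTION, not a result from the cited study.

BASE_LIFETIME_YEARS = 10.0  # lifetime at the reference temperature (from the text)
STEP_C = 30.0               # temperature rise covered by the quoted data point
LIFETIME_FACTOR = 0.5       # lifetime multiplier per STEP_C (10 -> 5 years)
POWER_FACTOR = 1.14         # power multiplier per STEP_C (+14%)


def lifetime_years(delta_t_c: float) -> float:
    """Estimated operating lifetime after a temperature rise of delta_t_c (°C)."""
    return BASE_LIFETIME_YEARS * LIFETIME_FACTOR ** (delta_t_c / STEP_C)


def relative_power(delta_t_c: float) -> float:
    """Estimated power draw relative to the reference temperature."""
    return POWER_FACTOR ** (delta_t_c / STEP_C)


if __name__ == "__main__":
    for rise in (0, 10, 20, 30):
        print(f"+{rise:>2} °C: lifetime ~{lifetime_years(rise):.1f} years, "
              f"power x{relative_power(rise):.3f}")
```

Under this assumed model, even a partial reduction in operating temperature recovers part of the lost lifetime, which is why small cooling gains can matter.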

Counterintuitively, poor thermal management increases the energy needed to keep electronics cool, as heat is removed inefficiently from hotspots. Older, inefficient datacenters use up to 40% of their energy on cooling, whereas newer centers can lower this below 20% [2,3]. Datacenters are expected to drive vast increases in energy consumption worldwide as AI usage grows across society and industry, so cooling improvements will lower strain on electricity grids and reduce carbon emissions.
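To put the 40% and 20% figures in perspective, here is a small worked calculation. It makes the simplifying assumption that all energy not spent on cooling goes to the computing (IT) load; real facilities have other overheads, and the 1,000 kWh IT load is a hypothetical value chosen for illustration.

```python
# Worked arithmetic for the cooling shares quoted in the text.
# ASSUMPTION: total energy = IT energy + cooling energy (no other overheads).

def total_energy_kwh(it_energy_kwh: float, cooling_fraction: float) -> float:
    """Total facility energy when cooling takes `cooling_fraction` of the total."""
    if not 0.0 <= cooling_fraction < 1.0:
        raise ValueError("cooling_fraction must be in [0, 1)")
    return it_energy_kwh / (1.0 - cooling_fraction)


it_load_kwh = 1000.0  # hypothetical IT load, for illustration only

older = total_energy_kwh(it_load_kwh, 0.40)  # cooling is 40% of the total
newer = total_energy_kwh(it_load_kwh, 0.20)  # cooling is 20% of the total
saving = 1.0 - newer / older                 # fraction of total energy saved

if __name__ == "__main__":
    print(f"Older datacenter: {older:.0f} kWh total")
    print(f"Newer datacenter: {newer:.0f} kWh total")
    print(f"Total energy saved for the same IT work: {saving:.0%}")
```

Under this assumption, cutting the cooling share from 40% to 20% reduces total energy use by a quarter for the same amount of computing.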

Datacenters also consume a lot of water, which is increasingly critical in an “era of global water bankruptcy” (UN). A small 1 MW datacenter can use 26 million litres of water a year for cooling alone, without even considering the water consumed by energy generation (fossil and nuclear energy require cooling water too!) [4]. Improving datacenter cooling and transitioning to renewable energy sources would be a win-win for water consumption in a water-stressed world.
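The water figure is easier to grasp per unit of energy. The short calculation below converts it, assuming (as a simplification not stated in the source) that the 1 MW datacenter draws its full power continuously all year.

```python
# Converts the quoted figure (1 MW datacenter, 26 million litres per year)
# into litres per kWh and litres per day.
# ASSUMPTION: the datacenter runs at a constant 1 MW for the whole year.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours in a non-leap year


def water_intensity_l_per_kwh(power_mw: float, litres_per_year: float) -> float:
    """Cooling water used per kWh of energy consumed."""
    energy_kwh = power_mw * 1000 * HOURS_PER_YEAR  # MW -> kW, then kW -> kWh
    return litres_per_year / energy_kwh


litres_per_year = 26_000_000
intensity = water_intensity_l_per_kwh(1.0, litres_per_year)
litres_per_day = litres_per_year / 365

if __name__ == "__main__":
    print(f"Water intensity: {intensity:.2f} litres per kWh")
    print(f"Daily use: {litres_per_day:,.0f} litres")
```

On these assumptions, that is roughly 3 litres of cooling water for every kWh consumed, or about 71,000 litres a day.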

Keeping It Cool with Heat Sinks

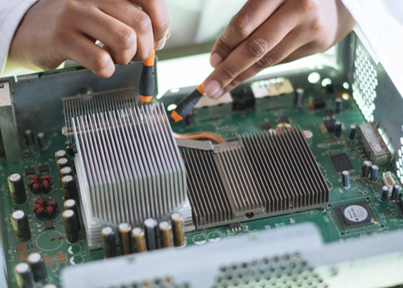

So, how can we keep the chips cool? Traditionally the answer has been heat sinks: large slabs of metal in contact with the chip. The slab absorbs the heat and transfers it to fins, where airflow carries the heat away. However, modern GPUs generate so much heat that even a very thick slab of metal cannot absorb and transfer heat fast enough to prevent extreme temperature rises.

Other approaches include flowing liquids through microchannels or even fully immersing the systems in a cooling fluid. While such approaches can be effective, they are expensive, and managing the circulation of large amounts of fluid and preventing leaks can be a challenge. There is clearly a need for a cost-effective cooling solution, and for this, vapour chambers may tick all the boxes.

Two-Phase Solutions – Vapour Chambers

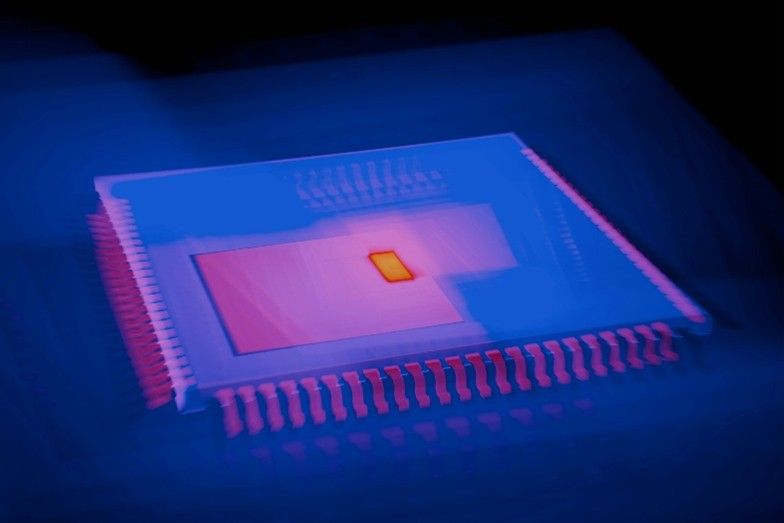

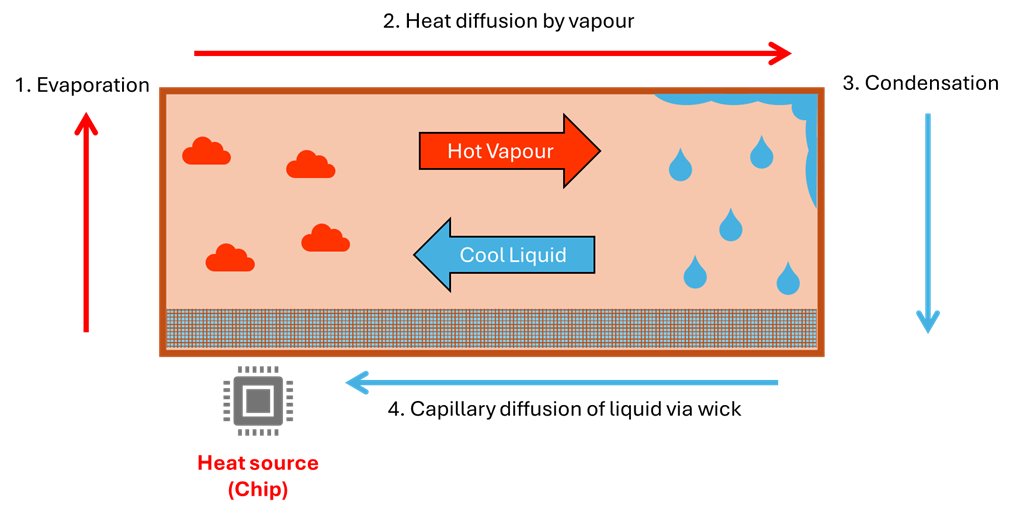

Vapour chambers (VCs) are a more recent invention that helps spread heat over a wide area so it can be removed more effectively by a heat sink. A VC contains a liquid sealed within a vacuum chamber: the liquid evaporates where it contacts a heat source, and the vapour spreads along the chamber, where it condenses and releases its heat. VCs are ubiquitous in high-end GPUs to improve heat spreading and removal – and yet they can be improved further by looking at one crucial component – the wick.

The bottleneck of vapour chambers is the wick, which returns the condensed liquid to the hotspot, where it can re-evaporate and continue the cooling cycle. The wick is typically a porous metal that moves the liquid by capillary forces – in the same way that a plant’s roots draw water up into the stem and on towards the leaves and flowers.
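The capillary forces that drive a wick can be estimated with the Young-Laplace relation for an idealised cylindrical pore, ΔP = 2σ·cos(θ)/r. The sketch below shows how the driving pressure grows as pores get smaller. The water surface tension (about 0.072 N/m near room temperature), the perfectly wetting contact angle and the example pore radii are illustrative assumptions, not properties of any particular wick or of M-Spin’s materials.

```python
import math

# Young-Laplace capillary pressure for an idealised cylindrical pore.
# ASSUMPTIONS: water near room temperature (sigma ~ 0.072 N/m) and a
# perfectly wetting surface (contact angle 0 degrees). Real wicks have
# irregular pores and run hotter, where surface tension is lower.


def capillary_pressure_pa(pore_radius_m: float,
                          surface_tension_n_per_m: float = 0.072,
                          contact_angle_deg: float = 0.0) -> float:
    """Capillary pressure (Pa) available to pull liquid through a pore."""
    if pore_radius_m <= 0:
        raise ValueError("pore_radius_m must be positive")
    theta = math.radians(contact_angle_deg)
    return 2.0 * surface_tension_n_per_m * math.cos(theta) / pore_radius_m


if __name__ == "__main__":
    # Hypothetical pore radii, chosen only to show the trend.
    for radius_um in (50.0, 10.0, 1.0):
        pressure = capillary_pressure_pa(radius_um * 1e-6)
        print(f"pore radius {radius_um:>4.0f} µm -> ~{pressure / 1000:.1f} kPa")
```

Smaller pores pull harder, but they also resist flow more, which is why a wick needs high porosity as well as small pores to move liquid quickly.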

The M-Spin Difference: Nanofibrous Metals with Ultrafast Wicking

M-Spin’s nanofibrous metals offer a step-change for VC wicks with enhanced coolant transport. An ideal wick combines high porosity, small pore size and high surface area in a thin layer (< 50 µm). Current materials cannot satisfy all of these criteria simultaneously, whereas the nanofibrous metals’ blend of high porosity, high surface area and low thickness is optimised for this purpose.

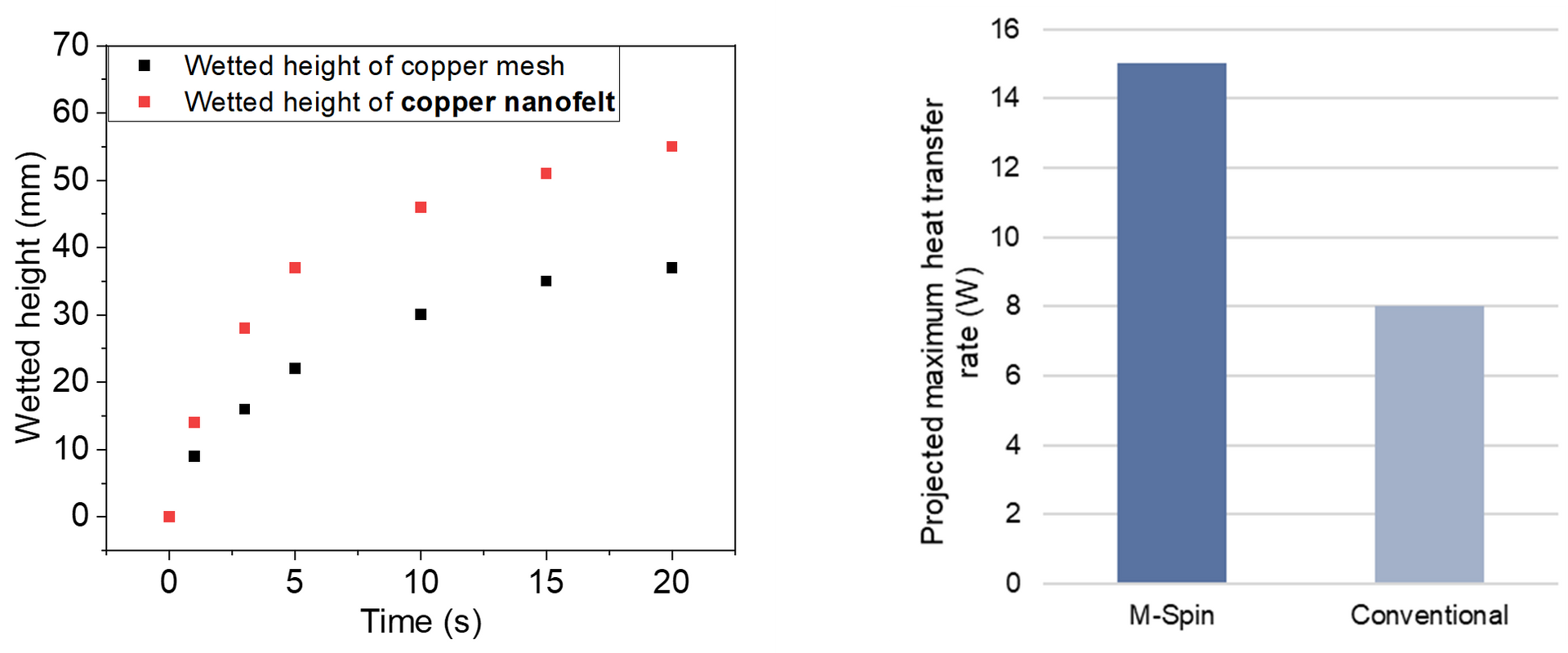

M-Spin have demonstrated a 50% increase in solvent wicking compared to conventional copper mesh, which translates into a projected >2x increase in cooling performance. This advance enables AI chip designers to achieve more stable high-power operation using thinner and more compact designs, while lowering energy costs and prolonging chip lifetime.

The Verdict – Cool It or Lose It

With the rapid expansion of AI, there is significant economic and environmental value in reducing the power and water consumption of datacenters and prolonging the life of their processors.

M-Spin’s nanofibrous metals in vapour chamber wicks remove the need to compromise between chip output and heat generation, allowing datacenters to run their processors harder while consuming less power and water. By focusing on thermal management, we allow datacenters to meet tomorrow’s demands without sacrificing sustainability.

References